I have written multiple times recently about the perils of market research, and a recent article by Kristen Berman and Dr. June John, [Don’t] Listen to Your Customer, builds on these perils. Berman and John write, “a sole reliance on customer input and feedback is built on an antiquated model of human decision making that assumes humans are rational,” and time and again we see that people’s decisions are often not driven by optimizing utility. Thus, talking to people about their desires and aspirations and then designing products around the answers fails, because most people do not know their own desires and aspirations.

People do not answer accurately

The core problem with market research is that you do not get true answers. In research done by Berman and John, only a small minority of people accurately answered a question about their own intent and what they consider important. They asked people why they were or were not saving for retirement. 86 percent of people responded that saving for retirement was important. That response conflicted with the reality that in a typical company (without automatic enrollment), fewer than 50 percent of people would sign up for the retirement program. The authors write, “[t]he real reason people save for retirement or (don’t save) is because they’ve been defaulted into a savings plan. Companies who automatically enroll employees in a retirement savings plan increase savings rates by 50 percentage points. It has nothing to do with a preference for the future or not having enough money.”

Data is both inaccurate and fails to reflect motivators

Not only do people provide responses inconsistent with their actions, they often do not understand the underlying causes of their behavior. In the retirement plan example, Berman and John followed up by asking respondents why they had not enrolled in their company plan. Although data showed the key driver was whether enrollment was the default or required opting in, only 6.2 percent of respondents cited anything related to form design or default enrollment.

Basing decisions on these responses could have ruinous results. As the authors point out, “if we relied solely on data from these traditional qualitative and quantitative research methods, we would likely spend a lot of time and money building solutions that would miss the mark, and for sure be less effective than just designing a default enrollment.”

People are not lying

It is not that people are trying to mislead you when they respond inaccurately. The fundamental issue is that we are not conscious of the mental processes leading to our decisions. We are also not cognizant that we do not know our own decision-making processes.

Another study cited in the article showed over 70 percent of people did not know the underlying reason for a decision they made. Berman and John asked people if a default setting for organ donation and retirement savings would impact their choice. Despite data showing that such defaults nearly double the chance of someone opting in, 71 percent of people who said the default would not impact their retirement or organ-donation decision were “very” or “completely” confident in their answer.

The authors cite another study that reaffirms how confident people are in their incorrect responses. “They showed participants two female faces and asked which face was more attractive. After the participant pointed to a face, the researcher secretly swapped photos to the one they didn’t choose (think David Cooperfield). Then, the participant was asked to explain why they chose the face (which they didn’t actually pick). A majority of participants didn’t even notice the face swap. And furthermore, they went on to explain in great detail why they preferred that face. Most participants made up the reasons—good reasons—for liking a face that they didn’t actually choose. Only a third of people realized they had been tricked.”

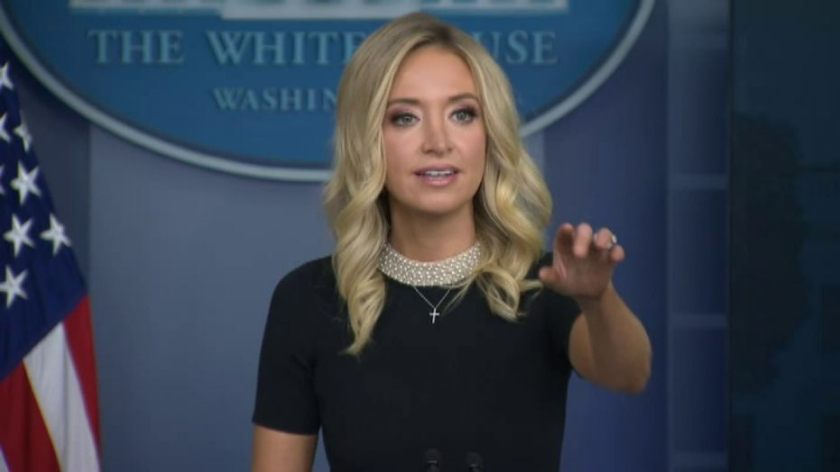

Why it happens, ask Kayleigh McEnany

Given that people are not intentionally trying to be dishonest, you can ask why they are so inaccurate at predicting and understanding their own behavior. Researchers have named this phenomenon the Internal Press Secretary. Berman and John explain, “[t]he brain’s Press Secretary must, like a president or prime ministers’ press secretary, explain our actions to other people. “I don’t know” is simply not an acceptable answer. Many times the brain’s Press Secretary is correct, but other times, it’s just guessing, piecing together clues in order to give a plausible answer. The tricky thing is, we don’t know when our Press Secretary is using facts and when it’s just guessing.”

Don’t ask, test

Given the challenges people face understanding and explaining the actions they will take, product managers and designers need to find alternatives to traditional market research. One of the most powerful is to test how people react to different choices. This testing can take the form of an A/B/n or multi-armed bandit test, where you present the options to test and control groups and measure what each group actually does. It can also be a preference test, where you show customers or potential customers different concepts and see which one they are most likely to purchase or would spend the most on.
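To make the testing idea concrete, here is a minimal sketch of how the results of an A/B/n test might be compared against a control group. It is not from Berman and John's article: the variant names, the visitor and conversion counts, and the choice of a two-proportion z-test are all assumptions made for illustration, and a real test would also need a sample size and stopping rule planned in advance.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion
    rates between group B and group A, using a pooled standard error."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Every visitor (or no visitor) converted in both groups:
        # there is no observable difference to test.
        return 0.0, 1.0
    z = (rate_b - rate_a) / se
    # erfc(|z| / sqrt(2)) equals the two-sided tail area of a standard normal.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def compare_to_control(results, control="control"):
    """Compare every variant to the control group.

    `results` maps a variant name to (conversions, visitors)."""
    conv_c, n_c = results[control]
    report = {}
    for name, (conv, n) in results.items():
        if name == control:
            continue
        z, p_value = two_proportion_z_test(conv_c, n_c, conv, n)
        report[name] = {
            "lift": conv / n - conv_c / n_c,  # absolute difference in rate
            "z": z,
            "p_value": p_value,
        }
    return report


# Hypothetical counts, invented purely for illustration.
results = {
    "control": (120, 1000),
    "variant_b": (150, 1000),
    "variant_c": (118, 1000),
}

if __name__ == "__main__":
    for name, stats in compare_to_control(results).items():
        print(f"{name}: lift={stats['lift']:+.3f}, "
              f"z={stats['z']:.2f}, p={stats['p_value']:.3f}")
```

The point of the sketch is that the input is what people did (conversions) rather than what they said they would do. With several variants, each comparison to control raises the chance of a false positive, so a stricter significance threshold is usually warranted.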

You can also look at responses in similar circumstances. How did a similar product or service perform? Did competitors launch comparable features? I often find that looking at adjacent or even completely different industries helps understand people’s true preferences. The key is using one or multiple tools that show actual decision making rather than relying on what customers believe is their preference.

Key takeaways

- A sole reliance on customer input and feedback, traditional market research, is built on a model of human decision making that assumes humans are rational, while in practice we are not.

- Not only do people provide a response inconsistent with their actions, they often do not understand the underlying causes of their behavior.

- Use one or multiple tools that show actual decision making, such as A/B/n testing or looking at reactions to similar initiatives in adjacent industries, rather than relying on what customers believe is their preference.
