While AB testing is an integral element of mobile and social game development (as well as the development of most digital products), in many situations there is a better option. Several years ago, I had the opportunity to serve as an advisor to a company that had some brilliant people. Their CTO was a strong advocate of using multi-armed bandit testing as a superior alternative to AB testing. Multi-armed bandit testing is not new: there was a popular post about it in 2012 (http://stevehanov.ca/blog/index.php?id=132), and it is used by Google and other tech giants. Yet people (especially product managers) still regularly default to traditional ABn testing.

The problem with AB testing is that you leave money and performance on the table. Until the test is over, the poorer performing variant(s) will always get a significant share of your traffic. With the multi-armed bandit approach, you allocate increasingly less traffic to poorly performing variants.

What is multi-armed bandit testing

A multi-armed bandit approach allows you to dynamically allocate traffic to variations that are performing well while allocating less and less traffic to underperforming variations. Instead of two distinct periods of pure exploration and pure exploitation, bandit tests are adaptive, and simultaneously include exploration and exploitation. As Optimizely wrote recently, “multi-armed bandit optimizations aim to maximize performance of your primary metric across all your variations. They do this by dynamically re-allocating traffic to whichever variation is currently performing best. This will help you extract as much value as possible from the leading variation during the experiment lifecycle, so you avoid the opportunity cost of showing sub-optimal experiences.”

Multi-armed bandit testing is commonly implemented as a Bayesian approach to AB testing. As Shawn Lu writes in a post titled Beyond A/B testing, “The foundation of the multi-armed bandit experiment is Bayesian updating. Each treatment (called ‘arm’) has a probability of success, which is modeled as a Bernoulli process. The probability of success is unknown, and is modeled by a Beta distribution. As the experiment continues, each arm receives user traffic, and the Beta distribution is updated accordingly.”
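To make the Bayesian updating in Lu's description concrete, here is a minimal sketch in Python. It uses Thompson sampling, one common way to turn Beta posteriors into traffic allocation; the quote itself does not name a specific algorithm, so that choice is an assumption. The class and function names, the uniform Beta(1, 1) prior, and the conversion rates are all hypothetical.

```python
import random


class BetaBernoulliArm:
    """One variant ("arm") with a Beta posterior over its unknown success rate."""

    def __init__(self, name):
        self.name = name
        self.alpha = 1.0  # prior successes + 1; Beta(1, 1) is a uniform prior (assumption)
        self.beta = 1.0   # prior failures + 1
        self.pulls = 0    # number of users sent to this arm

    def sample(self, rng):
        # Draw one plausible success rate from the current posterior.
        return rng.betavariate(self.alpha, self.beta)

    def update(self, converted):
        # Bayesian update: a success increments alpha, a failure increments beta.
        self.pulls += 1
        if converted:
            self.alpha += 1
        else:
            self.beta += 1


def choose_arm(arms, rng):
    # Thompson sampling: sample from every posterior, play the arm with the highest draw.
    return max(arms, key=lambda arm: arm.sample(rng))


def run_bandit(true_rates, n_users, seed=0):
    """Simulate n_users arriving one at a time; true_rates maps arm name -> conversion rate."""
    rng = random.Random(seed)
    arms = [BetaBernoulliArm(name) for name in true_rates]
    for _ in range(n_users):
        arm = choose_arm(arms, rng)
        converted = rng.random() < true_rates[arm.name]  # simulated user outcome
        arm.update(converted)
    return arms


# Hypothetical rates, chosen far apart so the effect is easy to see.
arms = run_bandit({"control": 0.05, "variant": 0.20}, n_users=5000)
for arm in arms:
    print(arm.name, "received", arm.pulls, "users")
```

In a run like this, most users should end up in the stronger arm, because its posterior draws win the comparison more and more often as evidence accumulates. The weaker arm still receives occasional traffic, which is the exploration half of the trade-off.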

A recap on ABn testing

To compare bandit testing with ABn testing (AB has two variants, a test and a control; the n allows for additional variants), let’s quickly recap how AB testing works. Alex Atkins summarizes it succinctly, writing “in statistical terms, a/b testing consists of a short period of pure exploration, where you’re randomly assigning equal numbers of users to Version A and Version B. It then jumps into a long period of pure exploitation, where you send 100% of your users to the more successful version of your site.”

Benefits of multi-armed bandit testing

Bandit algorithms try to minimize opportunity costs and regret (the difference between your actual return and the return you would have collected had you deployed the optimal option at every opportunity). Rather than letting an AB test run until it is statistically significant, a bandit test moves subjects into the best performing group faster, allowing you to capture more gains. Matt Gershoff writes, “Some like to call it earning while learning. You need to both learn in order to figure out what works and what doesn’t, but to earn; you take advantage of what you have learned. This is what I really like about the Bandit way of looking at the problem, it highlights that collecting data has a real cost, in terms of opportunities lost.”
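As a rough illustration of regret, the sketch below compares an even split (the exploration phase of an AB test) with a simple Thompson sampling bandit over the same number of users. It measures expected regret, meaning the gap between the best arm's conversion rate and the chosen arm's rate, summed over every user. The conversion rates, the Beta(1, 1) priors, and all function names are assumptions made for the example.

```python
import random


def expected_regret(true_rates, choices):
    # Sum, over every user, of (best arm's rate - chosen arm's rate).
    best = max(true_rates)
    return sum(best - true_rates[arm] for arm in choices)


def even_split_choices(n_arms, n_users):
    # AB-style exploration: rotate users evenly across all arms.
    return [user % n_arms for user in range(n_users)]


def thompson_choices(true_rates, n_users, seed=0):
    rng = random.Random(seed)
    wins = [1] * len(true_rates)    # Beta(1, 1) priors (assumption)
    losses = [1] * len(true_rates)
    choices = []
    for _ in range(n_users):
        # Sample a plausible rate for each arm and play the highest draw.
        draws = [rng.betavariate(w, l) for w, l in zip(wins, losses)]
        arm = draws.index(max(draws))
        choices.append(arm)
        # Simulate the user's outcome and update that arm's posterior.
        if rng.random() < true_rates[arm]:
            wins[arm] += 1
        else:
            losses[arm] += 1
    return choices


rates = [0.05, 0.20]  # hypothetical conversion rates for arms 0 and 1
n = 5000
split_regret = expected_regret(rates, even_split_choices(len(rates), n))
bandit_regret = expected_regret(rates, thompson_choices(rates, n))
print(f"even split: {split_regret:.1f} expected conversions lost")
print(f"bandit:     {bandit_regret:.1f} expected conversions lost")
```

The even split loses a fixed amount per user for as long as it runs, while the bandit's loss should flatten out once it has learned which arm is better. With rates that are much closer together, the gap between the two approaches narrows and the bandit needs more traffic to separate the arms.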

A related advantage of multi-armed bandit testing is that you make fewer mistakes while the test is running. An A/B test will always send a significant portion of traffic to the sub-optimal group until the test ends.

Also, as Shawn Lu writes, “[an] advantage of bandit experiment is that it terminates earlier than A/B test because it requires much smaller sample. In a two-armed experiment with click-through rate 4% and 5%, traditional A/B testing requires 11,165 in each treatment group at 95% significance level. With 100 users a day, the experiment will take 223 days. In the bandit experiment, however, simulation ended after 31 days, at the above termination criterion.” With A/B testing, by contrast, “if the treatment group is clearly superior, we still have to spend lots of traffic on the control group, in order to obtain statistical significance.”
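For readers who want to see where a figure like 11,165 can come from, here is a hedged sketch of a standard two-proportion sample size calculation. The quote only gives the significance level, so the two-sided test, the 95% power, and the particular pooled-variance formula are all assumptions on my part, not details confirmed by Lu's post. The function name is hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_group(p1, p2, alpha=0.05, power=0.95):
    """Users needed in each group to detect p1 vs p2 with a two-sided z-test.

    alpha=0.05 matches the quoted 95% significance level; power=0.95 is an
    assumption, since the quote does not state the power used.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value, two-sided
    z_beta = NormalDist().inv_cdf(power)           # quantile for the desired power
    p_bar = (p1 + p2) / 2                          # pooled rate under the null hypothesis
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p1 - p2) ** 2)


n = sample_size_per_group(0.04, 0.05)
days = 2 * n / 100  # two groups, 100 users per day in total
print(f"{n} users per group, about {days:.0f} days at 100 users a day")
```

Under these assumptions the result lands very close to the quoted sample size and duration. Lowering the power to the more common 80% would shrink the requirement considerably, which shows how sensitive these estimates are to the inputs.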

Finally, while not mathematically an advantage, bandit testing relieves the pressure to end a test too early. With ABn testing, frequently you will see one option perform better “directionally” and decide, or be forced to decide, to terminate the test and move everyone to the higher performing bucket before you get significant results. Unfortunately, this sometimes leads to picking an option that would be reversed once there is more data.

Why multi-armed bandit is not always the correct approach

The value of bandit testing does not mean you should completely abandon ABn testing. In Lu’s post, he writes “the convenience of smaller sample size comes at a cost of a larger false positive rate.” That is, you sometimes end up gravitating to the sub-optimal solution.

Alex Atkins also writes, “in essence, there shouldn’t be an ‘a/b testing vs. bandit testing, which is better?’ debate, because it’s comparing apples to oranges. These two methodologies serve two different needs.”

“A/B testing is a better option when the company has large enough user base, when it’s important to control for type I error (false positives), and when there are few enough variants that we can test each one of them against the control group one at a time.”

The Bandit Option

While multi-armed bandit testing is not always a better option than ABn testing, you should look closely at using bandit testing when possible. It can reduce the opportunity cost of your testing and relieve pressure to terminate tests prematurely.

Key takeaways

- While AB testing is the most common method of optimizing between alternatives, in many situations the multi-armed bandit approach is the better option.

- A multi-armed bandit approach allows you to dynamically allocate traffic to variations that are performing well while allocating less and less traffic to underperforming variations.

- Multi-armed bandit testing reduces regret (the loss from pursuing multiple options rather than the best option), is faster, and relieves the pressure to end the test prematurely.

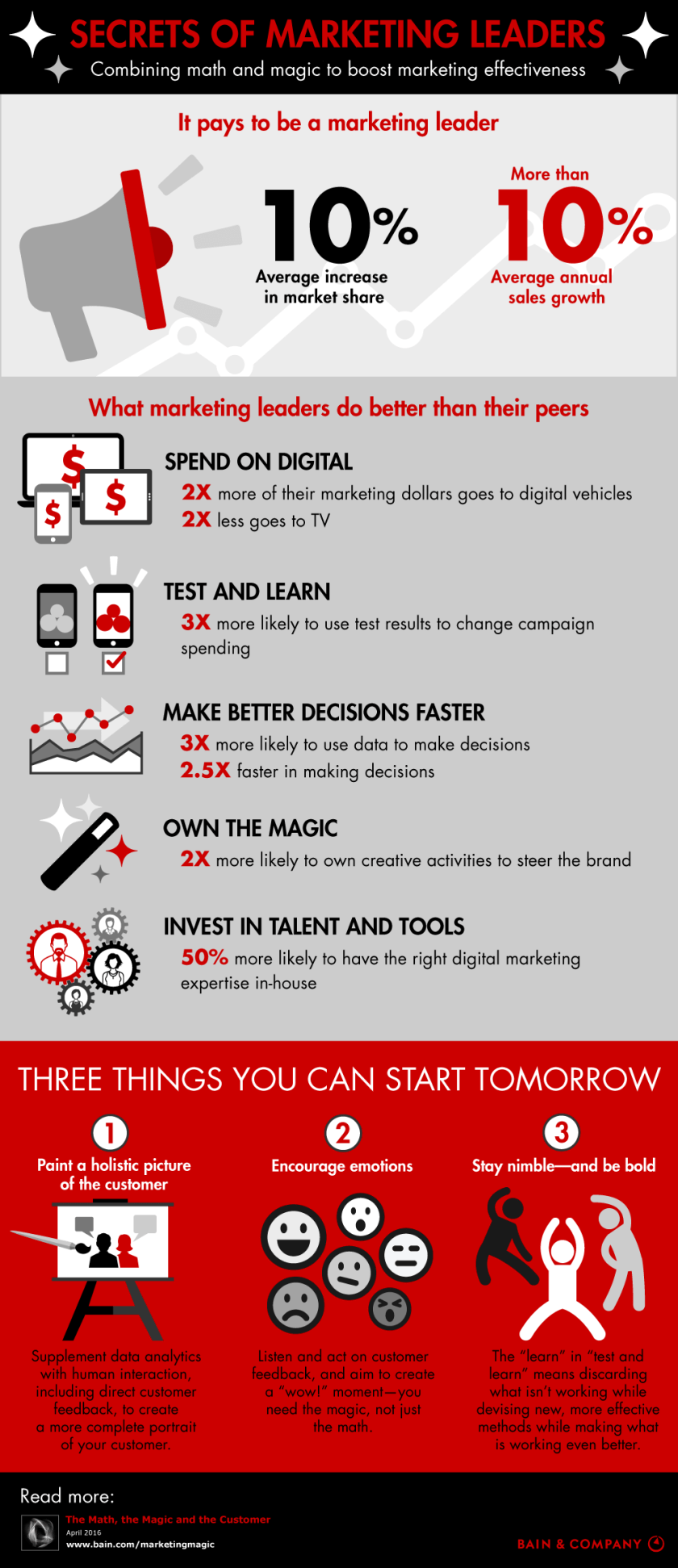

